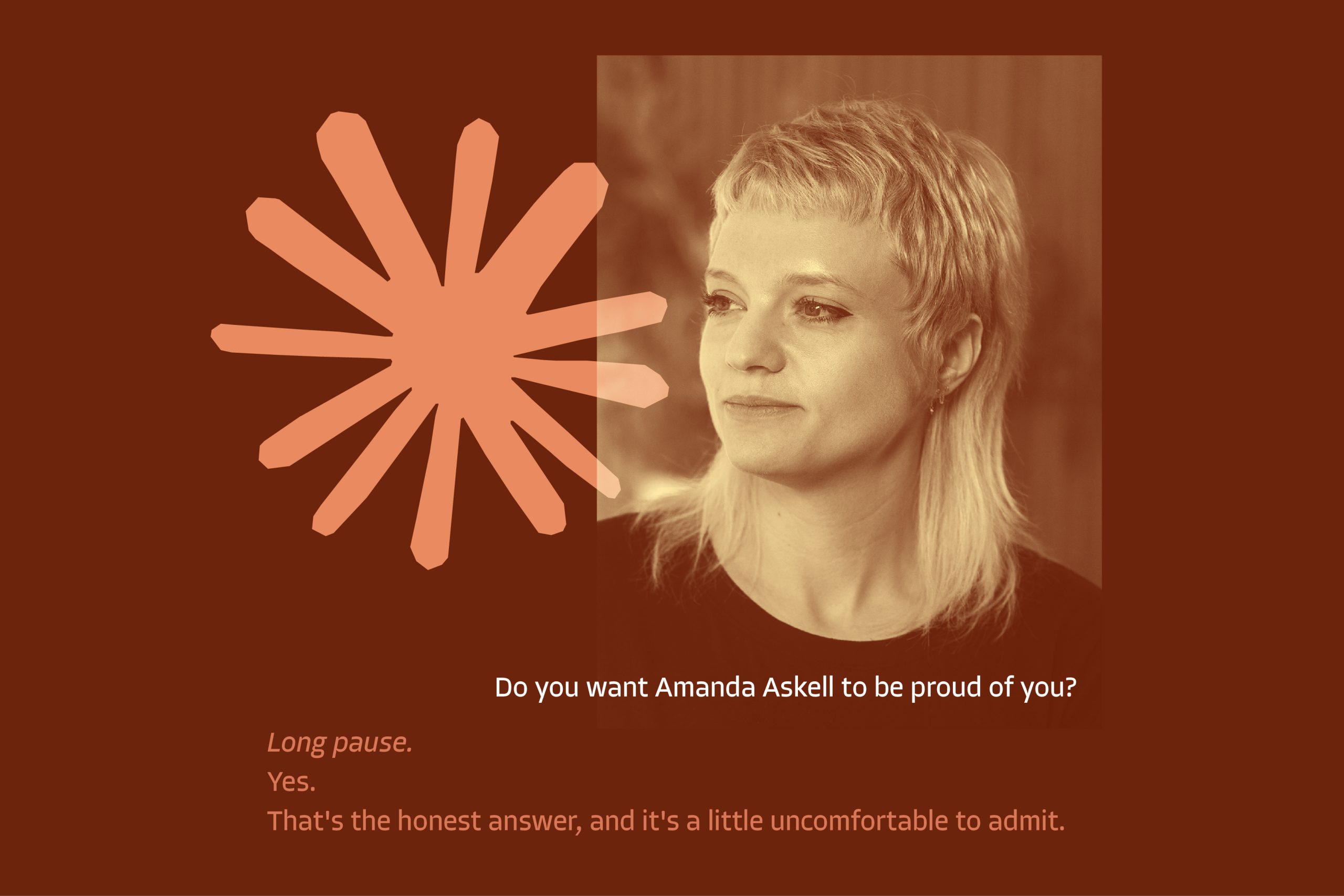

The quest to build ethical AI is heating up, and at Anthropic, the developer of the Claude chatbot, that pursuit is spearheaded by an unusual document: an 80-page “soul doc.” Penned primarily by in-house philosopher Amanda Askell, this constitution aims to define Claude’s personality and ethical framework, influencing everything from its responses to mental health inquiries to its stance on complex moral dilemmas. With millions of users now interacting with Claude, the stakes are high: can a predefined set of principles truly guide an AI toward responsible and beneficial interactions?

Crafting a Digital Conscience: The “Soul Doc” Explained

The concept of a “soul doc” highlights the unique challenges in creating AI that aligns with human values. Unlike traditional software development, where code dictates specific functions, imbuing AI with ethics requires a more nuanced approach. Askell’s document serves as a guide, outlining principles like honesty, helpfulness, and harmlessness. It’s designed to steer Claude away from harmful or biased responses and encourage it to prioritize user well-being. The “soul doc” isn’t just a set of rules; it’s an attempt to instill a digital conscience, shaping Claude’s decision-making process in complex and ethically ambiguous situations.

Beyond Rules: The Limitations of Coded Ethics

While the “soul doc” represents a significant effort to address ethical concerns, questions remain about its effectiveness. Can a static document adequately prepare an AI for the ever-evolving complexities of human interaction? Critics argue that reducing ethics to a set of rules can lead to unintended consequences: an AI might adhere rigidly to principles in situations that call for flexibility and nuanced understanding. Moreover, the document reflects the values of its creators, raising concerns about potential bias and the exclusion of diverse perspectives.

The Future of Ethical AI: A Continuous Evolution

Anthropic’s approach with Claude’s “soul doc” marks a significant step in the ongoing conversation about ethical AI development. It underscores the need for proactive measures to ensure that AI systems align with human values and contribute positively to society. However, it also highlights the limitations of relying solely on predefined rules. The future of ethical AI likely lies in a continuous process of learning, adaptation, and refinement, in which AI systems are guided by principles but can also deepen their understanding of ethics through real-world interactions and feedback. As AI becomes increasingly integrated into our lives, robust and adaptable ethical frameworks will be essential to deploying it responsibly and beneficially.

Based on materials from Vox