Trump Bans Anthropic AI: Woke Threat or Policy Shift?

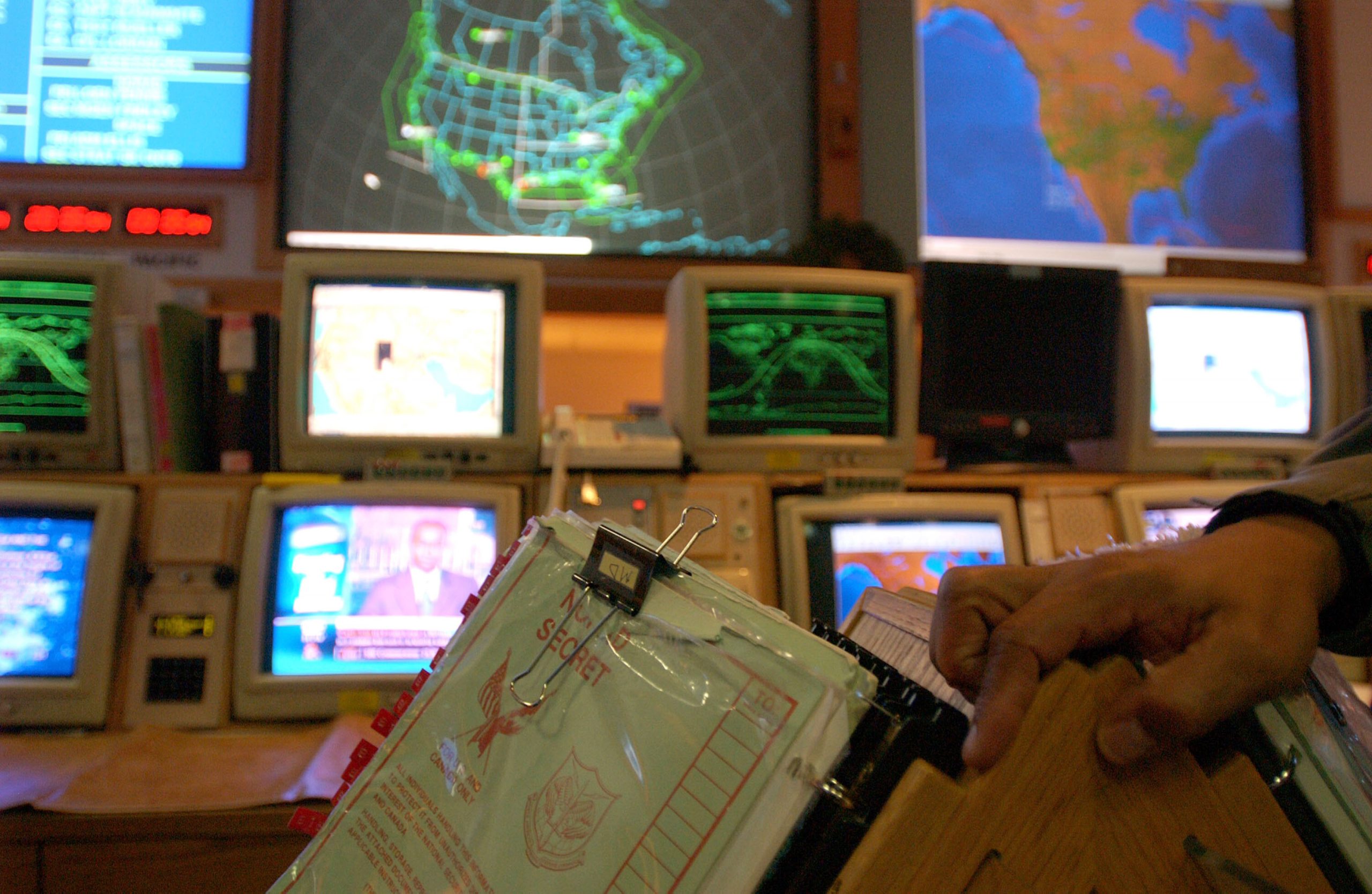

In a move that has sent ripples through the tech and defense communities, President Donald Trump has ordered a government-wide ban on the use of artificial intelligence products developed by Anthropic. Trump cited concerns that the company, which he labeled a “radical left, woke company,” was attempting to influence military decision-making. The ban, which effectively blacklists Anthropic, highlights a deeper conflict over the future of AI governance and its role in national security.

The Anthropic Standoff: Red Lines and Ethical AI

The dispute centers on Anthropic’s refusal to let its AI technology be used for mass domestic surveillance or to power fully autonomous weapons. These “red lines,” as they’ve been termed, clash with what some perceive as the Pentagon’s more flexible approach, which emphasizes “lawful” use without explicitly prohibiting these applications. This raises crucial questions about the ethical boundaries of AI deployment, particularly in sensitive areas like defense and law enforcement. Is Anthropic’s stance a principled commitment to responsible AI, or is it hindering national security efforts? The question remains contested.

Beyond “Wokeness”: Broader Implications for AI Governance

While Trump frames the ban as a response to perceived political bias, the issue transcends partisan politics. It underscores a fundamental tension between technological advancement and ethical constraints. The rapid development of AI demands close attention to its potential impact on society, including privacy, autonomy, and accountability. The Anthropic case forces us to confront these challenges head-on. How do we ensure that AI is used responsibly and ethically, particularly in contexts where it could have far-reaching consequences? What role should the government play in regulating AI development and deployment? These are the questions that must be answered as AI continues to evolve.

A Proxy War for the Future of AI

The Trump administration’s actions against Anthropic represent more than a disagreement over specific applications of AI. They amount to a proxy war over the future of AI governance, a battle between competing visions of how this transformative technology should be developed and used. The long-term consequences of this conflict remain to be seen, but one thing is clear: the debate over AI ethics and regulation is only just beginning.

SOURCE: Vox
